Apple 7B Model Chat Template

Chat templates are part of the tokenizer. They specify how to convert conversations, represented as lists of messages, into a single tokenizable string in the format that the model expects. Much like tokenization, different models expect very different input formats for chat. This is the reason we added chat templates as a feature. They also focus the model's learning on relevant aspects of the data.

Essentially, we build the tokenizer and the model with the from_pretrained method, and we use the generate method to chat with the help of the chat template provided by the tokenizer. The mlx_lm snippets import generate and load from mlx_lm, along with load_prompt_cache, make_prompt_cache, and save_prompt_cache from an mlx_lm cache module. Customize the chatbot's tone and expertise by editing the create_prompt_template function.

To shed some light on this, I've created an interesting project. This is a repository that includes proper chat templates (or input formats) for large language models (LLMs), to support the chat_template feature in transformers. GEITje comes with an Ollama template that you can use. This project is heavily inspired ... We compared Mistral 7B to ...

I'm trying to use a template to predictably receive chat output, basically just the AI to fill. I am quite new to fine-tuning and have been planning to fine-tune the Mistral 7B model on the SHP dataset. Subreddit to discuss Llama, the large language model created by Meta AI.

Pedro Cuenca on Twitter "Llama 2 has been released today, and of

Neuralchat7b Can Intel's Model Beat GPT4?

MPT7B A Free OpenSource Large Language Model (LLM) Be on the Right

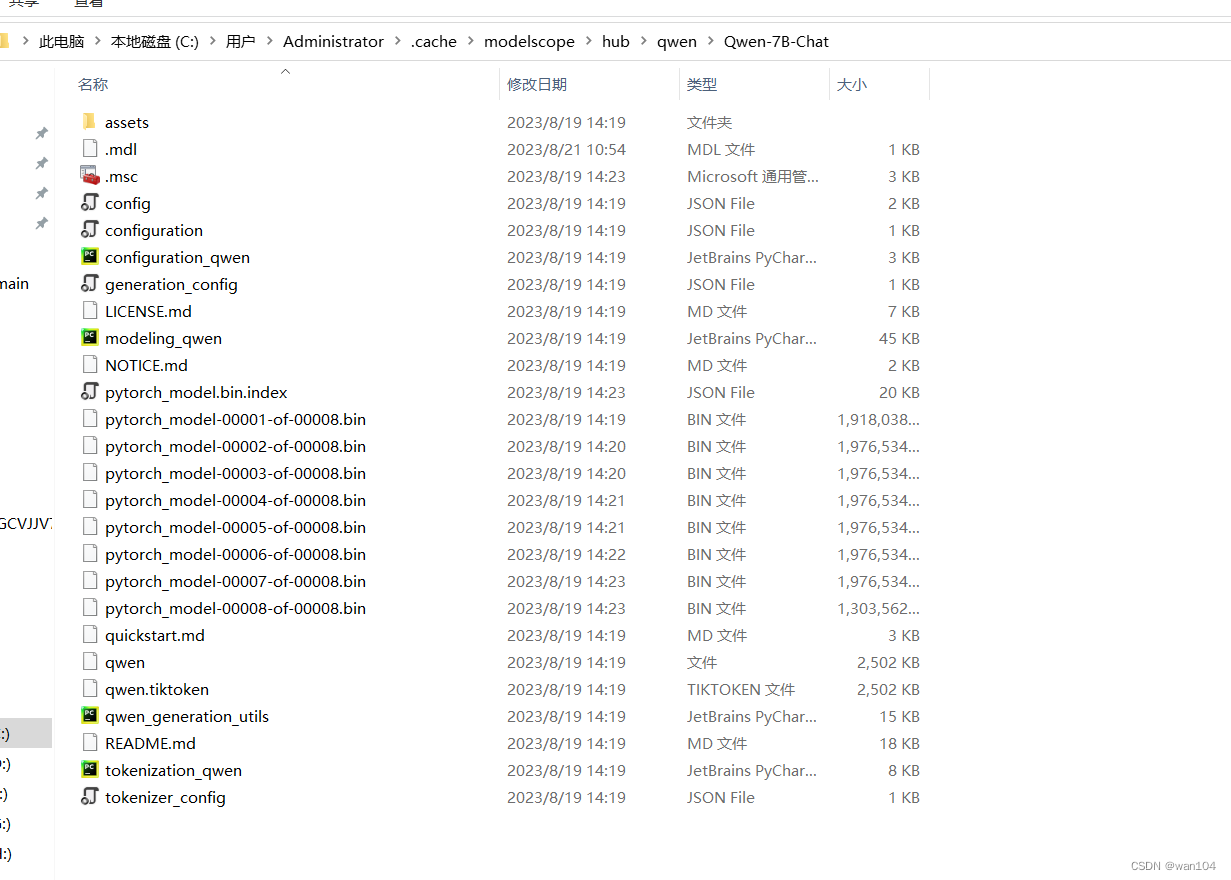

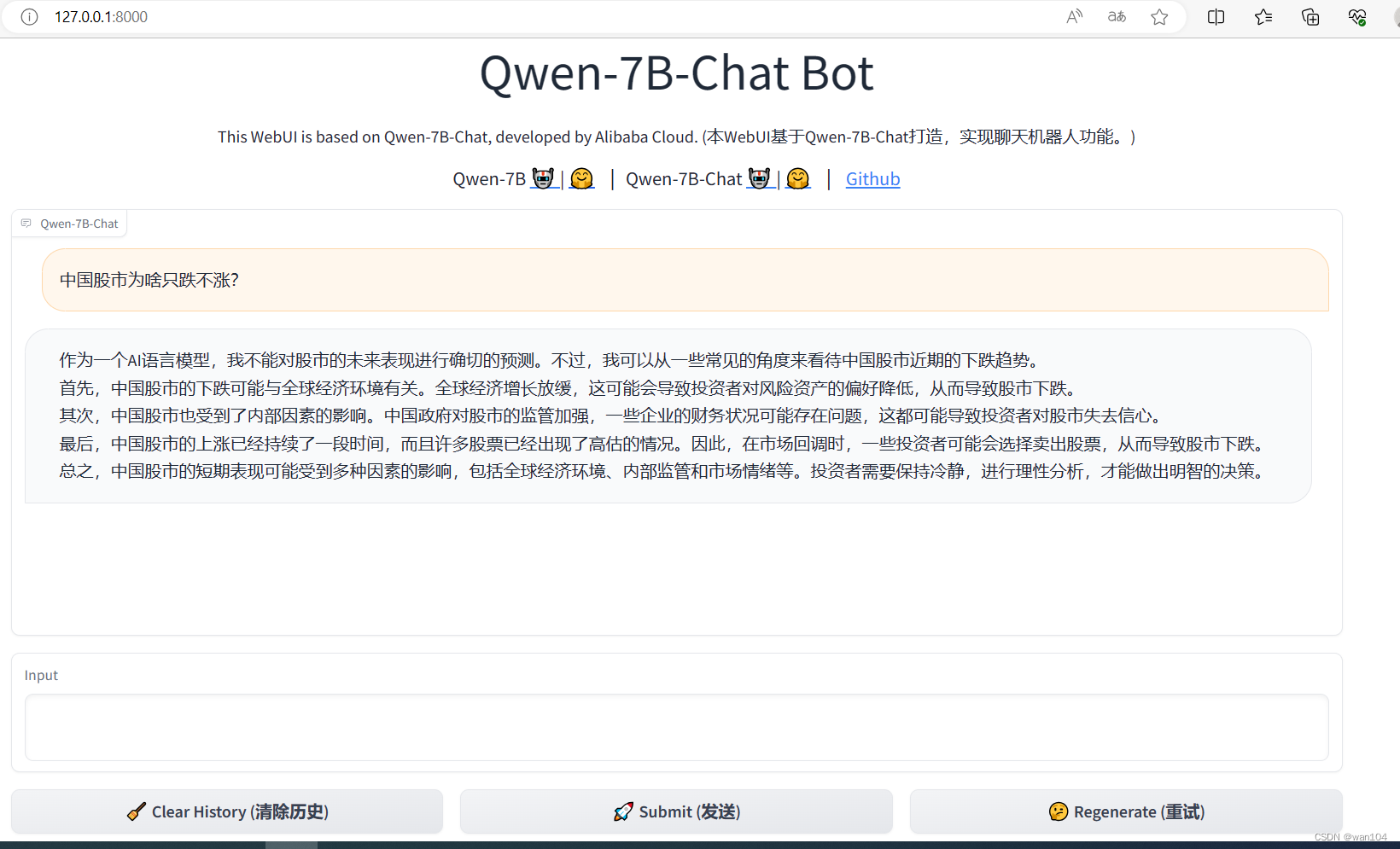

Tongyi Qianwen 7B and 7B-chat models: successful local deployment and reproduction (Tongyi Qianwen GitHub, CSDN blog)

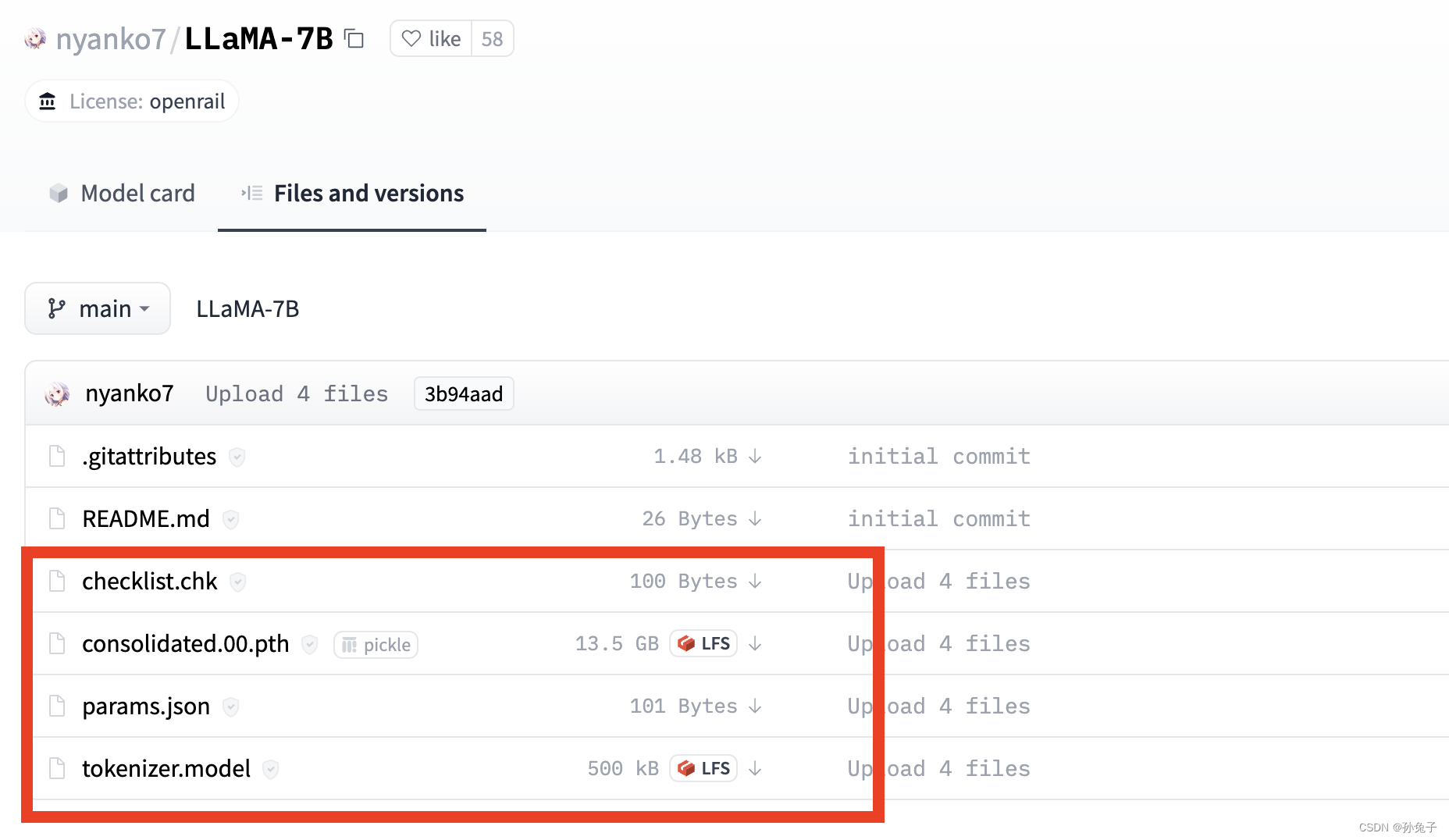

GitHub DecXx/Llama27bdemo: This demonstrates model [Llama27b

huggyllama/llama7b · Add chat_template so that it can be used for chat

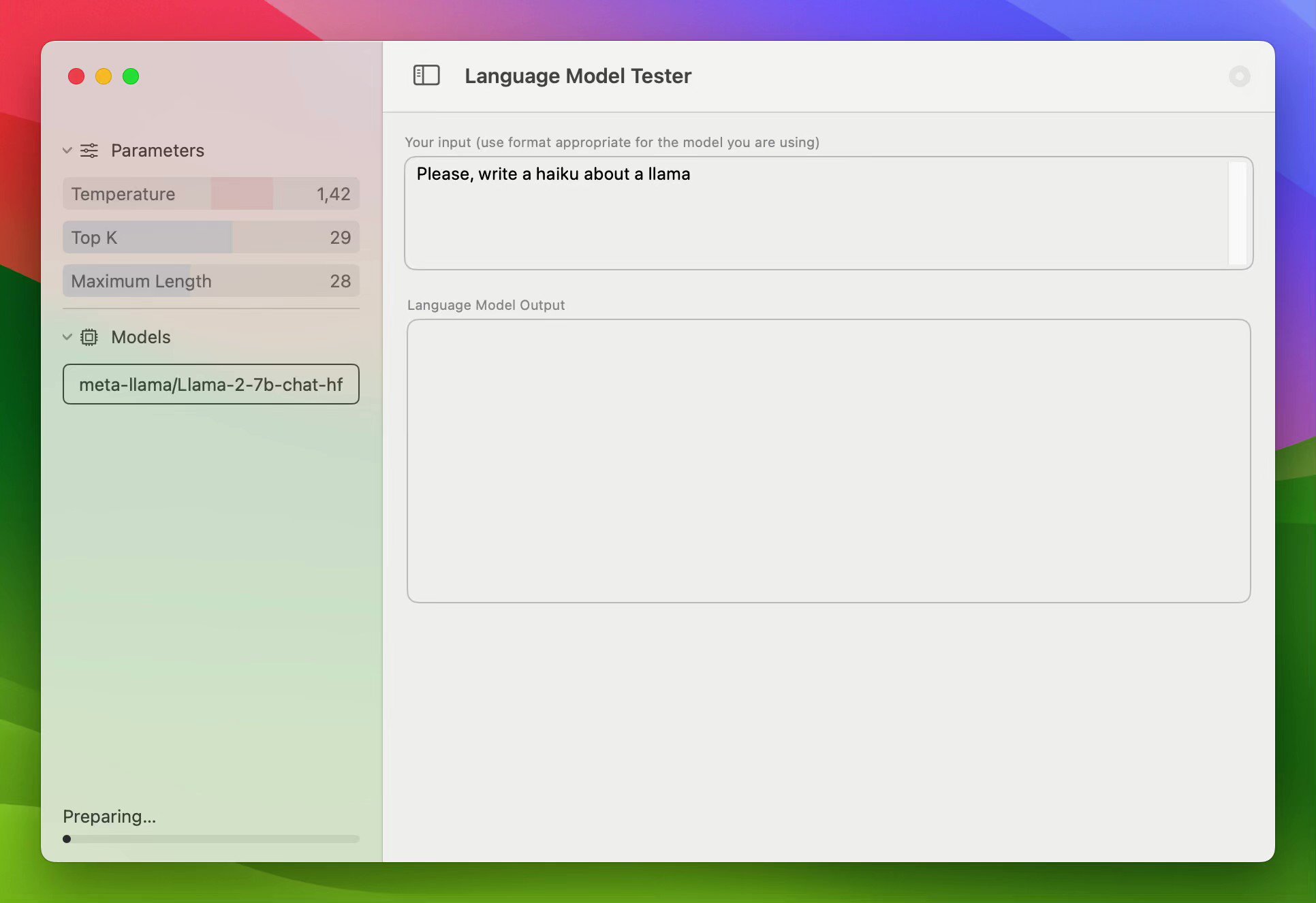

Mac Pro M2: "deploying ChatGPT locally" (running qwen7bchat locally on a Mac M2, CSDN blog)

AI for Groups Build a MultiUser Chat Assistant Using 7BClass Models

Unlock the Power of AI Conversations Chat with Any 7B Model from

Much Like Tokenization, Different Models Expect Very Different Input Formats For Chat.
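
To make the difference concrete, here is a minimal sketch that renders the same conversation in two styles. The function names `to_chatml` and `to_inst` are hypothetical, and both formats are simplified approximations of ChatML-style and [INST]-style prompts, not the exact template of any particular 7B model.

```python
# Illustrative only: simplified approximations of two common chat formats.
# The function names are hypothetical and not part of any library.

def to_chatml(messages):
    """Render messages in a ChatML-like style: one tagged block per turn."""
    return "".join(
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    )


def to_inst(messages):
    """Render messages in an [INST]-like style: user turns are wrapped,
    assistant turns follow as plain text."""
    parts = []
    for m in messages:
        if m["role"] == "user":
            parts.append(f"[INST] {m['content']} [/INST]")
        elif m["role"] == "assistant":
            parts.append(f" {m['content']} ")
        else:
            raise ValueError(f"unsupported role: {m['role']}")
    return "".join(parts)


conversation = [
    {"role": "user", "content": "Hello"},
    {"role": "assistant", "content": "Hi there!"},
    {"role": "user", "content": "What is a chat template?"},
]

chatml_prompt = to_chatml(conversation)
inst_prompt = to_inst(conversation)
print(chatml_prompt)
print(inst_prompt)
```

The two strings carry the same conversation but share almost no markup, which is why a prompt built for one model family tends to confuse another.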

They Specify How To Convert Conversations, Represented As Lists Of Messages, Into A Single Tokenizable String In The Format That The Model Expects.
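
With the transformers library, that conversion is done by the tokenizer's `apply_chat_template` method. The sketch below follows the from_pretrained and generate flow described on this page. The helper names `check_messages` and `chat`, the restricted role set, and the `max_new_tokens` default are assumptions for illustration, and `model_id` is left to the caller because this page does not name a specific checkpoint.

```python
def check_messages(messages):
    """Validate the list-of-messages shape that chat templates consume.

    Assumption: only 'system', 'user', and 'assistant' roles are allowed here;
    real templates may accept more (for example tool roles).
    """
    allowed = {"system", "user", "assistant"}
    for m in messages:
        if set(m) != {"role", "content"}:
            raise ValueError(f"expected 'role' and 'content' keys, got {sorted(m)}")
        if m["role"] not in allowed:
            raise ValueError(f"unknown role: {m['role']!r}")
    return messages


def chat(model_id, messages, max_new_tokens=128):
    """Build tokenizer and model with from_pretrained, then generate a reply."""
    # Imported lazily so check_messages works without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    # Turn the message list into the single string the model expects.
    prompt = tokenizer.apply_chat_template(
        check_messages(messages), tokenize=False, add_generation_prompt=True
    )
    # The template already inserted special tokens, so do not add them again.
    inputs = tokenizer(prompt, return_tensors="pt", add_special_tokens=False)
    output = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Keep only the newly generated tokens, not the echoed prompt.
    new_tokens = output[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `chat("<your-model-id>", [{"role": "user", "content": "Hello"}])` would download the checkpoint and return the assistant's reply as text. A tokenizer that ships without a chat template will raise an error at the `apply_chat_template` step.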

Chat With Your Favourite Models And Data Securely.

I'm Trying To Use A Template To Predictably Receive Chat Output, Basically Just The AI To Fill.
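
One common way to get the model to fill in only the AI turn is to end the prompt with an opened assistant header, which is what the `add_generation_prompt` option of `apply_chat_template` in transformers does. The sketch below mimics that idea in plain Python. The `render` function and the ChatML-like markers are assumptions for illustration, not the template of a specific model.

```python
# Illustrative only: a hypothetical renderer using ChatML-like markers.

def render(messages, add_generation_prompt=False):
    """Join all turns, optionally leaving an assistant turn open at the end."""
    text = "".join(
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    )
    if add_generation_prompt:
        # The open header tells the model that the next tokens belong to the
        # assistant, so it continues with a reply instead of another user turn.
        text += "<|im_start|>assistant\n"
    return text


messages = [
    {"role": "system", "content": "You are a concise assistant."},
    {"role": "user", "content": "Name one use of a chat template."},
]

prompt = render(messages, add_generation_prompt=True)
print(prompt)
```

Everything before the final header is fixed by the template, so the only free text the model produces is the assistant's answer.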
