Llama 3.1 8B Instruct Template Ooba

Llama is a large language model developed by... Llama 3.1 comes in three sizes: ... This page covers capabilities and guidance specific to the models released with Llama 3.2: the Llama 3.2 quantized models (1B/3B), the Llama 3.2 lightweight models (1B/3B) and the Llama... Use with transformers: you can run...

Llama 3 Instruct special tokens used with Llama 3: a prompt should contain a single system message, can contain multiple alternating user and assistant messages, and always ends with... When you receive a tool call response, use the output to format an answer to the original...

How do I use custom LLM templates with the API? How do I specify the chat template and format the API calls? I have it up and running with a front end. Currently I managed to run it, but when answering it falls into an endless loop until... Putting <|eot_id|>, <|end_of_text|> in custom stopping strings doesn't change anything. I still get answers like this: ...

I tried my best to piece together the correct prompt template (I originally included links to sources, but Reddit did not like the links for some reason). I wrote the following instruction template which... Here are instructions for anybody else who needs to set the instruction template correctly in oobabooga: you don't touch the instruction template at all, because the model loader...

unsloth/llama38bInstructbnb4bit · Hugging Face

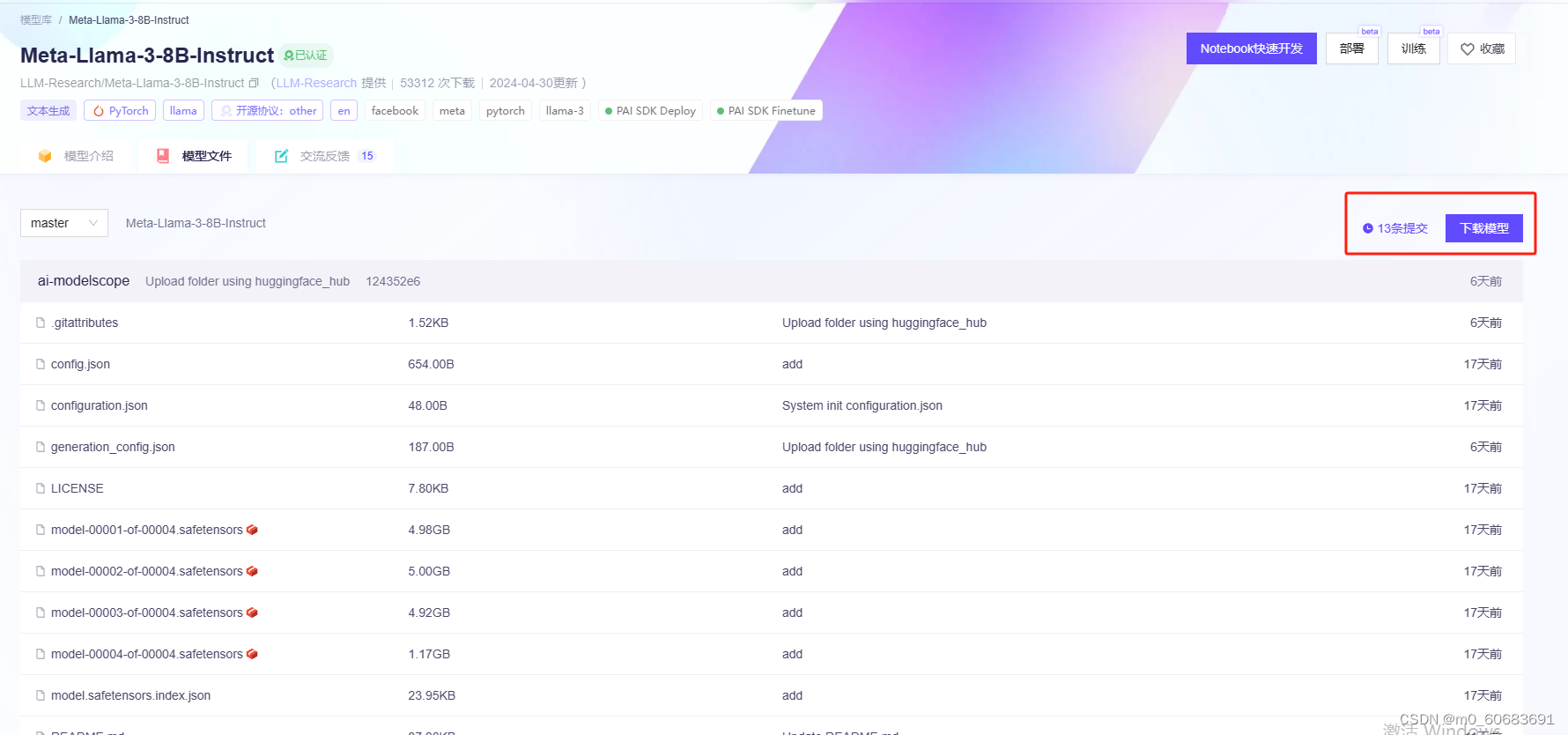

metallama/MetaLlama38BInstruct · Where can I get a config.json

Junrulu/Llama38BInstructIterativeSamPO · Hugging Face

META LLAMA 3 8B INSTRUCT LLM How to Create Medical Chatbot with

Llama 3 Swallow 8B Instruct V0.1 a Hugging Face Space by alfredplpl

Llama 3 8B Instruct Model library

anguia001/MetaLlama38BInstruct at main

Meta Llama 3.1 8B Instruct By metallama Benchmarks, Features and

Tutorial: Fine-tuning the llama38b large model with LLaMA_Factory_llama3 model fine-tuning and saving_llama38binstruct download CSDN blog

Manage Access models/llama38binstruct

How Do I Use Custom LLM Templates With The API?
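One possible way to approach this, sketched under assumptions: oobabooga's text-generation-webui can expose an OpenAI-style chat completions endpoint, and the request body can carry a template override. The field names `mode` and `instruction_template_str`, and the URL in the comment, are assumptions here and should be checked against the API documentation of the installed version. The helper names `build_chat_request` and `send_chat_request` are hypothetical.

```python
import json
import urllib.request
from typing import Dict, List, Optional


def build_chat_request(
    messages: List[Dict[str, str]],
    instruction_template_str: Optional[str] = None,
    max_tokens: int = 256,
) -> Dict[str, object]:
    """Build the JSON body for an OpenAI-style chat completions call.

    "mode" and "instruction_template_str" are ASSUMED extension fields;
    "messages" and "max_tokens" are standard OpenAI-style fields.
    """
    payload: Dict[str, object] = {
        "messages": messages,
        "mode": "instruct",
        "max_tokens": max_tokens,
    }
    # Only override the template when one is supplied; otherwise the
    # server keeps whatever template it picked up when loading the model.
    if instruction_template_str is not None:
        payload["instruction_template_str"] = instruction_template_str
    return payload


def send_chat_request(url: str, payload: Dict[str, object]) -> Dict[str, object]:
    """POST the payload as JSON and return the decoded JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


# Usage (hypothetical local URL, not taken from the page above):
# body = build_chat_request([{"role": "user", "content": "Hello"}])
# reply = send_chat_request("http://127.0.0.1:5000/v1/chat/completions", body)
```

Leaving `instruction_template_str` unset matches the advice quoted on this page that the template usually does not need to be touched; the override is only there for the case where the loaded template is wrong.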

I Wrote The Following Instruction Template Which.

A Prompt Should Contain A Single System Message, Can Contain Multiple Alternating User And Assistant Messages, And Always Ends With.
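The rule above can be illustrated with a minimal sketch that assembles a Llama 3 Instruct prompt by hand. It assumes the published Llama 3 special tokens (`<|begin_of_text|>`, `<|start_header_id|>`, `<|end_header_id|>`, `<|eot_id|>`) and assumes the prompt closes with an open assistant header so the model writes the next turn. The function name `format_llama3_prompt` is hypothetical, and the check accepts a missing system message, which is looser than the rule as quoted.

```python
from typing import Dict, List

BEGIN_OF_TEXT = "<|begin_of_text|>"
END_OF_TURN = "<|eot_id|>"


def _header(role: str) -> str:
    """Return the role header that precedes each message body."""
    return f"<|start_header_id|>{role}<|end_header_id|>\n\n"


def format_llama3_prompt(messages: List[Dict[str, str]]) -> str:
    """Render chat messages into a single Llama 3 Instruct prompt string."""
    if not messages:
        raise ValueError("at least one message is required")

    roles = [m["role"] for m in messages]
    # At most one system message, and only in first position.
    if roles.count("system") > 1 or "system" in roles[1:]:
        raise ValueError("only one system message is allowed, and it must come first")

    # After the optional system message, turns alternate user/assistant.
    turns = roles[1:] if roles[0] == "system" else roles
    for index, role in enumerate(turns):
        expected = "user" if index % 2 == 0 else "assistant"
        if role != expected:
            raise ValueError(f"expected a {expected} message at turn {index}, got {role}")
    if not turns or turns[-1] != "user":
        raise ValueError("the last message must come from the user")

    parts = [BEGIN_OF_TEXT]
    for message in messages:
        parts.append(_header(message["role"]) + message["content"].strip() + END_OF_TURN)
    # Open assistant header: generation continues from here.
    parts.append(_header("assistant"))
    return "".join(parts)


if __name__ == "__main__":
    print(format_llama3_prompt([
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "What is the capital of France?"},
    ]))
```

If generation runs past the end of the answer, comparing the prompt actually sent to the model against this layout is one way to see whether the template, rather than the stopping strings, is the problem.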

You Don't Touch The Instruction Template At All, Because The Model Loader.
